Court documents reveal a man began to groom an underage girl on Roblox and convinced her to exchange cellphone numbers.

INDIANAPOLIS — A federal lawsuit was filed Wednesday against one of the most popular gaming platforms in the world, with lawyers alleging Roblox allowed a predator to exploit a 10-year-old Johnson County girl.

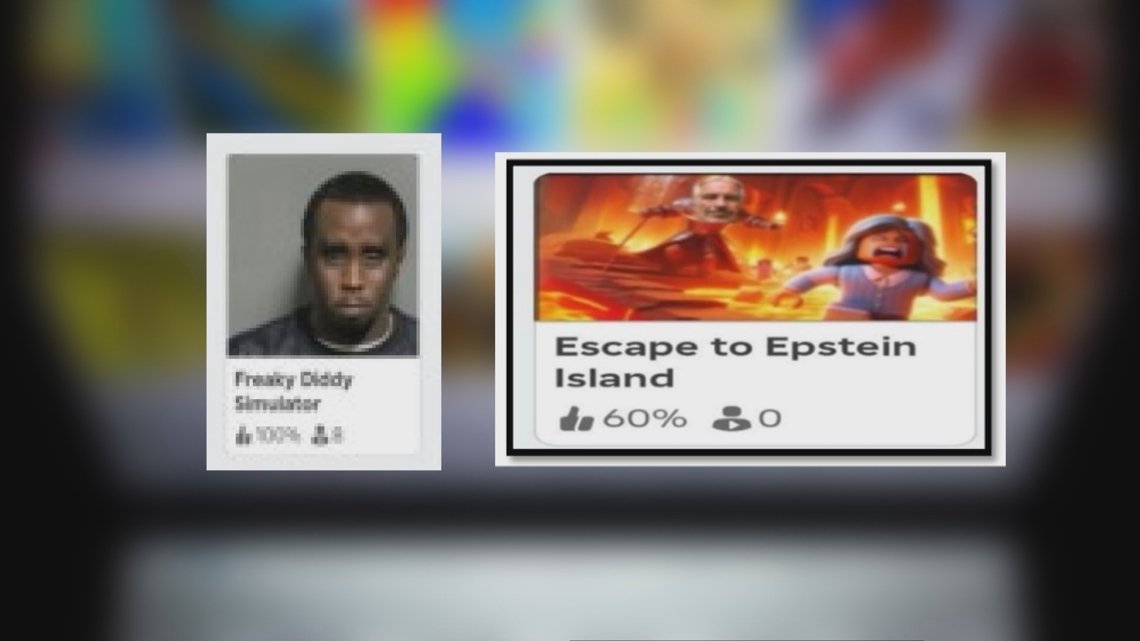

“Freaky Diddy Simulator,” “Escape to Epstein Island”

The titles above are just two examples of the thousands of mini-games created by Roblox users. According to investigators, users create virtual environments that other users can join, with some using screen names referencing infamous convicted sex offenders like rapper Sean “Diddy” Combs, who was convicted earlier this year of sex crimes, and late financier Jeffrey Epstein, who was charged with running a sex trafficking ring involving underage girls.

Investigators say the accounts that create those virtual environments are permitted to participate openly in children’s games. It’s one of the many disturbing pieces of evidence now filed in a lawsuit in the Northern District of California, where Roblox is headquartered.

Those examples are among the many reasons Stan Gipe, a partner with Dolman Law Group, is representing a now-11-year-old Johnson County girl and her family. The court filing identifies the plaintiff as Jane Doe.

“They all flocked to this platform”

“This is one of probably 800 to 900 similarly situated children that we represent,” Gipe told 13News. “I hate to do an analogy like this, but for pedophiles, word got out that Roblox was the good fishing ground, so they all flocked to this platform.”

The court filing reveals Doe had been an avid user of Roblox for years. Investigators say Doe’s father allowed his daughter to use the gaming platform, believing there were safeguards in place. But the lawsuit alleges that in 2024, Doe, who was 10 years old at the time, came into contact with a man on Roblox.

Court documents reveal the man began to groom Doe, eventually gaining her trust and convincing her to exchange cellphone numbers. It was on video chat that investigators say the man coerced the girl into undressing and engaging in sexual acts, which he then recorded.

“This isn’t like old days. Once this is out there, this photo never comes back,” Gipe said. “(The photos are) in Russia, it’s in China. It’s being sold to people as child pornography, which is what it is.”

“An enormous responsibility”

This lawsuit comes amid a string of other suits and claims alleging Roblox enabled sexual predators to connect with and abuse children.

Roblox’s founder and CEO says he rejects some descriptions that paint Roblox in a bad light.

“I want to highlight much of what you hear about is not happening on Roblox, and many parents aren’t aware that many other apps that kids have access to allow image sharing, allowed texting,” Roblox founder and CEO David Baszucki told CNN this week.

Baszucki says the gaming platform already has measures in place to protect young users.

“Every day, over 150 million people (come to) Roblox, and they have amazing experiences,” Baszucki said. “They learn, they communicate with their friends, they get interested in STEM. And it’s an enormous responsibility for us at Roblox. It’s why we built safety into the platform.”

New safety features

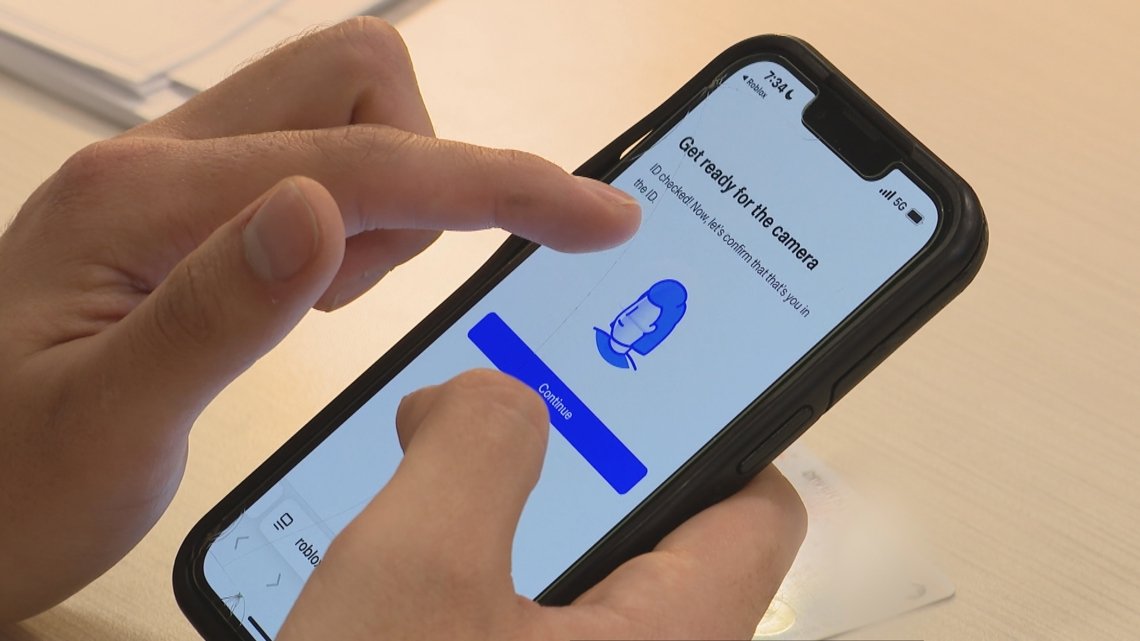

This week, Roblox rolled out a new voluntary age verification process for users who want to use chat features. Users who opt in can provide a government ID or let artificial intelligence estimate their age with a face scan.

It will become mandatory in Australia, New Zealand and the Netherlands in December, and globally in early 2026.

While Roblox says the new feature will increase the number of users verifying their age, Gipe wants parents to know the problem is not limited to Roblox.

“Use Roblox and what you learn from looking at this stuff in this litigation to educate you about what might be going on on other platforms,” Gipe said.

In December, a federal panel will review whether this lawsuit should be grouped with almost two dozen similar cases. The decision would determine whether they proceed together as a single class action.

A spokesperson with Roblox sent 13News the following statement:

“We are deeply troubled by any incident that endangers our users. While we cannot comment on claims raised in litigation, protecting children is a top priority, which is why our policies are purposely stricter than those found on many other platforms. We recently announced our plans to require facial age checks for all users accessing chat features, making us the first online gaming or communication platform to do so. This innovation enables age-based chat and limits communication between minors and adults. We also limit chat for younger users, don’t allow the sharing of external images, and have filters designed to block the sharing of personal information.

We dedicate substantial resources—including advanced technology and 24/7 human moderation—to help detect and prevent inappropriate content and behavior, including attempts to direct users off-platform where safety standards and moderation may be less stringent than ours. We understand that no system is perfect, which is why we are constantly working to improve our safety tools and platform restrictions. We have launched 145 new safety initiatives this year alone and recognize this is an industry-wide issue requiring collaborative standards and solutions.

We encourage anyone to report content or behavior that may violate our Community Standards using our Report Abuse feature. We also partner with law enforcement and leading child safety and mental health organizations worldwide to combat the sexual exploitation of children such as the Tech Coalition’s Lantern project and Robust Open Online Safety Tools or ROOST.”