Evaluating how well a description matches an image is fundamental to multi-modal research. Traditional metrics such as BLEU rely heavily on human-written references, and because those references capture only part of an image's visual content, they often over-penalize valid descriptions. Consequently, reference-free metrics based on vision-language pre-trained models (VLMs) have gained popularity for their strong agreement with human judgment. However, the black-box nature of VLMs leaves potential internal flaws unexamined. A key open question is whether these metrics remain reliable when used as rewards for model optimization, since unidentified vulnerabilities could steer training toward text of poor linguistic quality.

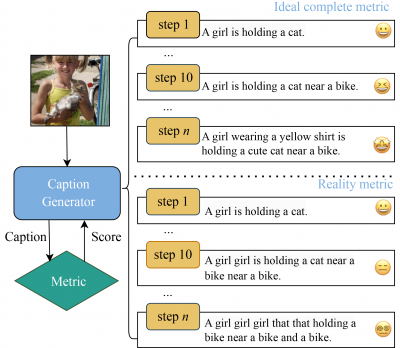

To investigate this, the researchers used a reinforcement learning “hacking” approach, in which mainstream metrics serve as rewards that guide model generation. Surprisingly, as metric scores rose, the generated sentences became incoherent and unreadable, exposing significant gaps in existing evaluation mechanisms. To address these vulnerabilities, they proposed Negative Text Contrastive Learning (NTCL). By incorporating the hacked, flawed sentences as negative samples, NTCL-enhanced metrics learn to distinguish accurate descriptions from disorganized text.
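The idea of treating hacked sentences as extra negatives can be illustrated with a minimal sketch. This is not the authors' formulation: it assumes a generic InfoNCE-style contrastive loss over cosine similarities, and the embeddings, the function names (`cosine`, `contrastive_loss`), and the temperature value are all hypothetical choices made for illustration.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def contrastive_loss(image, positive, negatives, temperature=0.07):
    """InfoNCE-style loss: negative log-softmax of the positive caption
    among the positive and all negative captions (assumed form, not the paper's)."""
    logits = [cosine(image, positive) / temperature]
    logits += [cosine(image, neg) / temperature for neg in negatives]
    # Log-sum-exp with max subtraction for numerical stability.
    peak = max(logits)
    log_denominator = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_denominator - logits[0]


# Toy embeddings (hypothetical). In practice these would come from a VLM encoder.
image = [1.0, 0.0, 0.2]
good_caption = [0.9, 0.1, 0.2]
other_captions = [[0.0, 1.0, 0.0], [-0.5, 0.5, 0.1]]
# Stand-in for an unreadable sentence that still lies close to the image embedding.
hacked_caption = [1.0, 0.0, 0.0]

baseline = contrastive_loss(image, good_caption, other_captions)
with_hacked = contrastive_loss(
    image, good_caption, other_captions + [hacked_caption]
)

print(f"loss without hacked negative: {baseline:.4f}")
print(f"loss with hacked negative:    {with_hacked:.4f}")
```

In this toy setup the hacked caption sits close to the image embedding, so adding it as a negative raises the loss. Minimizing that loss would push the hacked caption's similarity down relative to the accurate caption, which is the behavior the text attributes to NTCL-enhanced metrics.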

On the “Flaws Caption” benchmark, NTCL-enhanced metrics showed a substantial gain in robustness, outperforming current state-of-the-art methods by 38.2 points. This study paves the way for designing robust multi-modal evaluation systems.

https://link.springer.com/article/10.1007/s11704-025-50178-6