As someone working at the intersection of cybersecurity and public sector technology, I’ve long respected the Essential Eight framework developed by the Australian Cyber Security Centre (ACSC). It’s practical, actionable, and has helped lift the security posture across government agencies and critical infrastructure. But the world has changed. And so must our approach.

In this short article, I’m hoping to start a conversation and offer some practical ideas for how we can evolve the framework in a way that keeps pace with AI-driven threats while preserving its core strengths.

This article is not about discarding what works, but about building on it. The Essential Eight has been a cornerstone of cyber hygiene in Australia. But in a world of AI-powered threats and AI-dependent systems, we need to ask: Is it enough? And how do we evolve it while keeping it practical and widely adoptable?

The core message: Why the Essential Eight needs to evolve

The Essential Eight was designed for a threat environment dominated by conventional malware, phishing, and privilege escalation. Today, attackers are using AI to:

- Rapidly generate polymorphic malware.

- Craft highly convincing phishing at scale.

- Bypass traditional application controls.

- Target AI models and the data that feeds them.

Meanwhile, governments are increasingly considering AI to support decision-making, manage infrastructure, and deliver public services, which makes those systems targets in their own right.

If we’re going to defend in this new era, we need to update the playbook.

The new AI landscape

AI is not just reshaping how we work. It’s reshaping how attackers operate. We are all seeing:

- AI-generated malware that can mutate faster than signature-based tools can catch it.

- Social engineering campaigns scaled by generative language models.

- Deepfakes that mimic trusted identities.

- New attack surfaces across machine learning models and data pipelines.

The Essential Eight was not built for this reality. I’m sharing this to spark a conversation:

How do we evolve the frameworks we trust without losing their simplicity or clarity?

I don’t have all the answers, but I believe this is the right time to ask better questions.

- How do we modernise our most trusted frameworks without overcomplicating them?

- What is already working that we can learn from?

- What risks and opportunities do you see in applying AI to both defence and offence?

If you work in or around cyber strategy, government systems, or critical infrastructure, I’d love to hear your input. There’s an opportunity to collectively evolve the frameworks that keep our systems safe.

1. A Proposal: Expanding to an “Essential Ten”

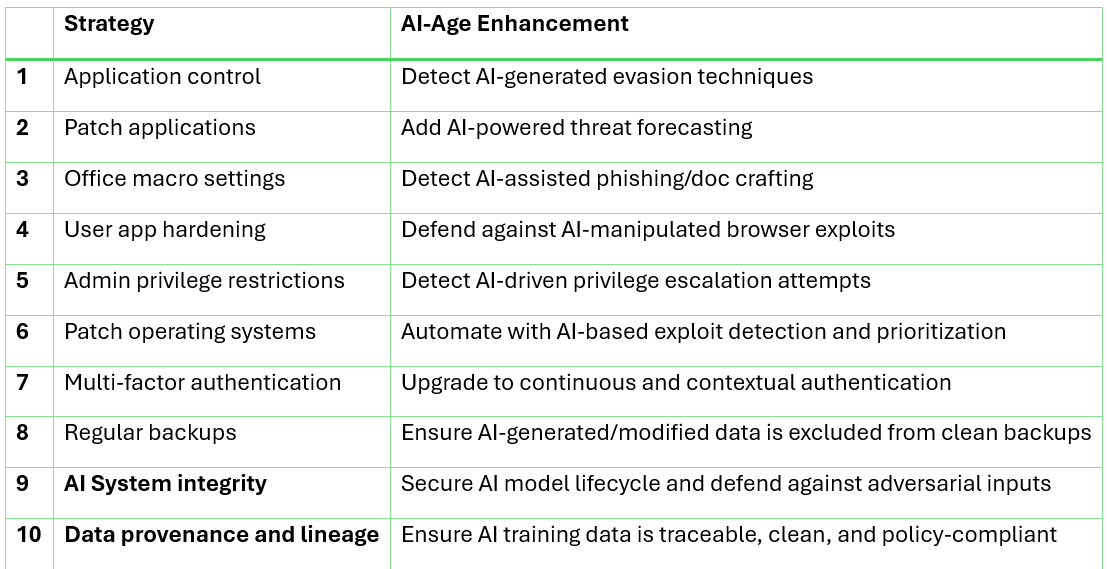

To meet the challenges of the AI era, I believe we need to expand the framework to include two new strategies. They’re extensions of core security principles, adapted to a new class of assets: AI models and training data.

1. AI system integrity: As government agencies deploy AI to support decision-making, fraud detection, or service delivery, we must secure the models themselves. That means testing for adversarial inputs, monitoring for drift, securing model pipelines, and validating training data.

2. Data provenance and lineage: AI systems are only as trustworthy as the data they learn from. Without proper tagging, lineage tracking, and origin checks, we risk training sensitive systems on poisoned, biased, or unauthorised data.

These aren’t just IT hygiene issues. They’re national security concerns.
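As one way to picture the origin checks described above, here is a minimal sketch that records a content hash when a dataset is approved and re-checks it before training. The `ProvenanceRecord` fields, the function names, and the sample data are all hypothetical, chosen for illustration only; a real lineage system would track far more than this.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical record describing where a dataset came from."""
    name: str
    source: str        # originating agency or system (illustrative)
    approved_by: str   # who authorised its use for training (illustrative)
    sha256: str        # content hash captured at approval time


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the dataset contents."""
    return hashlib.sha256(data).hexdigest()


def register(name: str, source: str, approved_by: str, data: bytes) -> ProvenanceRecord:
    """Create a provenance record for data at the moment it is approved."""
    return ProvenanceRecord(name, source, approved_by, fingerprint(data))


def verify(record: ProvenanceRecord, data: bytes) -> bool:
    """True only if the data still matches the hash recorded at approval."""
    return fingerprint(data) == record.sha256


if __name__ == "__main__":
    original = b"claim_id,amount\n1,120.50\n2,89.00\n"
    record = register("claims-sample", "example-agency", "data-steward", original)
    print(json.dumps(asdict(record), indent=2))

    # Simulate a row being silently appended after approval.
    tampered = original + b"3,999999.00\n"
    print("original matches record:", verify(record, original))
    print("tampered matches record:", verify(record, tampered))
```

A hash check like this only shows that data has not changed since it was approved. It says nothing about whether the data was biased or poisoned at the source, which is why tagging and lineage tracking still matter.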

2. Enhancing the original Eight to be AI-aware

Every one of the existing Essential Eight strategies can (and should) be updated to account for AI-enhanced threats. The aim is to keep the “essential” truly essential while making it current.

For example:

- Application control must detect evasive, AI-generated binaries.

- Multi-factor authentication needs to go beyond passwords plus SMS codes to include continuous authentication, behavioural biometrics, and phishing-resistant tokens.

- Backup strategies must include validation to ensure AI-corrupted data hasn’t silently made its way into recovery points.

- Patch management should leverage AI-driven threat forecasting, not just CVSS scores.
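To make the patch management point concrete, the sketch below ranks a backlog by blending CVSS severity with a forecast of exploitation likelihood. The `exploit_likelihood` score is assumed to come from some forecasting model or feed, the 0.6 weighting is arbitrary, and the CVE identifiers are placeholders, not real entries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vulnerability:
    cve_id: str                # placeholder identifier, not a real CVE
    cvss: float                # CVSS base score, 0.0 to 10.0
    exploit_likelihood: float  # assumed forecast probability, 0.0 to 1.0


def priority(v: Vulnerability, likelihood_weight: float = 0.6) -> float:
    """Blend normalised severity with forecast likelihood (illustrative weights)."""
    severity = v.cvss / 10.0
    return (1.0 - likelihood_weight) * severity + likelihood_weight * v.exploit_likelihood


def patch_order(vulns: list[Vulnerability]) -> list[Vulnerability]:
    """Return vulnerabilities sorted from highest to lowest priority."""
    return sorted(vulns, key=priority, reverse=True)


backlog = [
    Vulnerability("CVE-EXAMPLE-A", cvss=9.8, exploit_likelihood=0.02),
    Vulnerability("CVE-EXAMPLE-B", cvss=7.5, exploit_likelihood=0.90),
    Vulnerability("CVE-EXAMPLE-C", cvss=5.3, exploit_likelihood=0.10),
]

for v in patch_order(backlog):
    print(f"{v.cve_id}: priority={priority(v):.2f}")
```

With these made-up numbers, the 7.5-severity item that is likely to be exploited is ranked ahead of the 9.8-severity item that is not, which is the shift in emphasis the bullet argues for.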

Example: Enhanced Essential Eight evolving in the age of AI

3. Move from static compliance to continuous assurance

In an AI-driven threat environment, annual audits or static checklists aren’t enough. We need real-time, automated, AI-assisted validation of security controls. This allows us to detect gaps before they become breaches and to respond in hours, not weeks.
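A minimal sketch of what automated control validation could look like: each control is expressed as a small check that runs against a snapshot of current state, and any failure is reported as a gap. The snapshot fields, the thresholds (24 hours, zero overdue patches), and the `ControlCheck` structure are assumptions made for illustration, not ACSC requirements.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ControlCheck:
    control: str                   # which strategy the check covers
    description: str               # what "passing" means
    probe: Callable[[dict], bool]  # returns True when the control holds


def evaluate(checks: list[ControlCheck], state: dict) -> list[str]:
    """Run every check against the current state and return the failures."""
    failures = []
    for check in checks:
        try:
            ok = check.probe(state)
        except Exception:
            ok = False  # a probe that cannot run is treated as a gap
        if not ok:
            failures.append(f"{check.control}: {check.description}")
    return failures


CHECKS = [
    ControlCheck("Multi-factor authentication",
                 "all privileged accounts have MFA enabled",
                 lambda s: all(a["mfa"] for a in s["privileged_accounts"])),
    ControlCheck("Regular backups",
                 "last verified backup is under 24 hours old",
                 lambda s: s["hours_since_verified_backup"] < 24),
    ControlCheck("Patch applications",
                 "no critical patches are overdue",
                 lambda s: s["critical_patches_overdue"] == 0),
]

# Hypothetical snapshot; in practice this would come from live telemetry.
snapshot = {
    "privileged_accounts": [{"name": "admin1", "mfa": True},
                            {"name": "admin2", "mfa": False}],
    "hours_since_verified_backup": 6,
    "critical_patches_overdue": 0,
}

for gap in evaluate(CHECKS, snapshot):
    print("GAP:", gap)
```

Run on a schedule, or triggered by configuration changes, a loop like this turns an annual checklist into something closer to a continuous signal. The AI-assisted part would sit on top, for example in deciding which gaps matter most.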

4. Continue supporting a shared AI threat intelligence fabric

Government and critical infrastructure sectors can easily become siloed. We need to continue supporting a secure, cross-agency intelligence-sharing network that can use AI to correlate signals and identify threats early without compromising data privacy.

This might include federated learning approaches, real-time telemetry sharing, or red/blue teaming with AI agents.

5. Make AI security a core part of cyber culture

We must build AI security literacy into the culture of cybersecurity teams. Defending against AI-powered attacks and protecting AI systems requires new knowledge and skills. This includes understanding adversarial ML, data poisoning, model inversion, and much more.

We need to train for the world we’re entering, not the one we’re leaving behind.

6. Make the Essential Eight framework collaborative and open (within trusted bounds)

Today, the Essential Eight is centrally managed. While that ensures consistency, it can also limit agility.

What if we opened it up (at least domestically) for contribution by trusted experts across government, industry, and academia?

Picture a secure, transparent model for collaborative evolution, similar to open-source software but with tiered review and approval. This could help:

- Tap into the collective intelligence of the cybersecurity community.

- Respond to emerging threats faster.

- Build shared ownership over a framework that protects us all.

In the age of AI, frameworks can’t remain static. We need mechanisms to evolve in real time.

Ghaith Kayed has 20 years of experience delivering AI, IoT, and analytics programs across Australia, New Zealand, UK and the USA. Article originally published here.